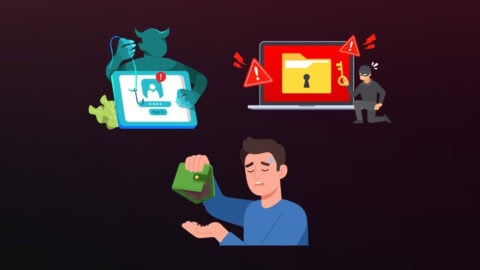

A few years ago, deepfakes were mostly a novelty. Celebrity face-swaps, political parody videos, the kind of thing that got shared around and forgotten by the next morning. Impressive, sure, but not exactly a boardroom concern.

That has changed. And it has changed faster than most people in financial services were prepared for.

Deepfakes are now a routine part of how fraud gets committed. Not occasionally, not experimentally: routinely. The targets are not public figures anymore. They are KYC flows, onboarding screens, and the biometric checks that banks and fintechs put in place specifically to stop this kind of thing.

How Bad Have the Numbers Actually Gotten?

Deepfake detections worldwide quadrupled between 2023 and 2024. In Q1 2025 alone, there were more incidents logged than in the whole of 2024. US deepfake fraud losses hit $1.1 billion last year, up from $360 million the year before. Globally, the average company hit by deepfake fraud lost around $450,000 per incident.

What shifted is access. Building a convincing deepfake used to take genuine technical skill and hardware most fraudsters could not afford. Now there are free tools that do most of the work, and tutorial videos that walk through the rest. The craft has been commoditised, and the fraud volumes reflect that.

The Onboarding Flow Is the Target

Remote identity verification went mainstream during the pandemic and never went back. That was broadly a good thing; it made financial services more accessible and cut out a lot of friction for legitimate customers. But it also handed fraudsters a new attack surface to pick at.

The attack patterns worth knowing about:

- Face-swap deepfakes: A stolen identity’s face gets overlaid onto the fraudster’s own during a live selfie check. To the camera, it looks like a real person. It is not.

- Video injection: Instead of holding a deepfake up to the camera, the attacker skips the camera entirely, injecting a synthetic video stream directly into the verification pipeline via virtual camera software. The platform receives what looks like a legitimate live feed. It is not that either.

- Synthetic identities: AI-generated faces paired with fabricated or stolen personal data. These are not real people at all, but they can pass document checks if the verification layer is not built to catch them.

- Voice cloning: Mostly a call centre and social engineering problem for now, but increasingly relevant as voice authentication gets more widespread.

Half of all businesses globally reported dealing with audio or video deepfake fraud in 2024. Crypto platforms got hit hardest; incidents in that sector rose 654% year on year. Fintech and traditional financial services were not far behind.

Old Verification Methods Were Built for a Different Problem

Document fraud used to mean someone altering a physical ID with a razor blade and a photocopier. The countermeasures built to catch that (UV checks, hologram verification, trained document reviewers) are not much use against a photorealistic synthetic passport generated in seconds by an AI model.

Selfie checks were supposed to be the answer. Take a photo of your face, match it against the ID. The logic was sound when the threat was someone trying to use a different person’s document. It stops making sense when the face in the selfie can be faked in real time.

Knowledge-based authentication (security questions, memorable dates) had already been eroding for years as personal data became easier to scrape. Deepfakes did not kill it, but they have made the case for moving past it a lot harder to argue against.

There is a particular issue with deepfake attacks that makes them worse than most fraud types: when one gets through, there is no alert. The system logs a successful verification. The account looks clean. The fraudster is inside, and nobody knows.

Detection tools tested in controlled environments against known deepfakes perform reasonably well. Against new attack techniques they have not been trained on, accuracy can drop by 50% or more. Fraudsters probe verification systems the same way penetration testers do, trying different approaches until something works, and they have the time and financial incentive to keep at it.

What Liveness Detection Is Actually Doing

The job of liveness detection is narrow but important: confirm that a real person is physically present in front of the camera, right now.

Active liveness checks prompt the user: blink, turn your head, smile. That is harder to fake than a static photo, but easier to work around than passive methods if the attacker has real-time deepfake software running.

Passive liveness operates without any prompts. It watches the video feed for signals that synthetic media consistently gets wrong: micro-movements in the face, the way light reflects off skin at different angles, blood-flow-driven colour changes, depth and texture cues. These are not things a human reviewer would notice. They are things a well-trained model picks up on even when the deepfake looks visually perfect to the naked eye.

Better implementations combine both: passive analysis runs continuously, and active prompts are triggered when the confidence score drops below a threshold. Then comes a cross-check: does the face on the camera match the face on the ID document, and does the whole session look like a genuine live capture rather than a replay or a feed that has been tampered with?
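To make that escalation logic concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the thresholds, the field names, and the `next_step` function are hypothetical, and the scores are presumed to come from whatever liveness and face-matching models a platform already runs.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds; real values would be tuned per model and risk appetite.
PASSIVE_CONFIDENCE_THRESHOLD = 0.85
FACE_MATCH_THRESHOLD = 0.80


@dataclass
class SessionSignals:
    passive_score: float           # 0..1 confidence from the passive liveness model
    active_passed: Optional[bool]  # None means no active prompt has been issued yet
    face_match_score: float        # 0..1 similarity between live face and ID photo
    capture_looks_live: bool       # False if a replay or tampered feed is suspected


def next_step(s: SessionSignals) -> str:
    """Decide what the verification flow should do next for this session."""
    # A session that does not look like a genuine live capture is rejected outright.
    if not s.capture_looks_live:
        return "reject"
    # Low passive confidence escalates to an active prompt (blink, turn, smile).
    if s.passive_score < PASSIVE_CONFIDENCE_THRESHOLD:
        if s.active_passed is None:
            return "prompt_active_challenge"
        if not s.active_passed:
            return "reject"
    # Final cross-check: the live face must match the face on the document.
    if s.face_match_score < FACE_MATCH_THRESHOLD:
        return "reject"
    return "accept"


if __name__ == "__main__":
    print(next_step(SessionSignals(0.95, None, 0.90, True)))
    print(next_step(SessionSignals(0.50, None, 0.90, True)))
```

The point of the ordering is that legitimate users with a strong passive score never see a prompt, while borderline sessions pay the extra friction.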

Injection Attacks Are a Different Problem Entirely

Most of the public conversation about deepfake fraud focuses on face-swap attacks. Injection attacks get less attention, but they are arguably harder to defend against because they sidestep the camera altogether.

In a standard verification flow, the platform assumes the video it is receiving came from the user’s actual camera. Injection breaks that assumption; the attacker intercepts the video pipeline before it reaches the platform and substitutes a synthetic stream. The platform never sees a real camera feed at all. It sees something that looks exactly like one.

Camera-based liveness detection, no matter how good, does not help here. The platform is being fed a signal that has already been compromised upstream.

Defending against injection means checking things that have nothing to do with the video content itself: whether the device has virtual camera software installed, whether the session metadata looks consistent with a genuine device capture, and whether there are signals at the OS or hardware level that suggest something has been inserted into the pipeline. It is a different technical problem, and a lot of platforms are not set up to handle it yet.
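As a rough illustration of what those non-video checks might look like, the Python sketch below collects a few session-level red flags. The field names, the hint list, and the `injection_flags` function are all hypothetical. Matching on a camera label is crude and easy to evade, so treat this as a picture of the idea rather than a working defence.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Illustrative substrings only (assumption); a real list would be maintained and
# combined with OS- and hardware-level signals that this sketch does not cover.
VIRTUAL_CAMERA_HINTS = ("virtual", "obs", "manycam")


@dataclass
class DeviceSession:
    camera_label: str                     # name the device reports for its camera
    reported_resolution: Tuple[int, int]  # what the session metadata claims
    frame_resolution: Tuple[int, int]     # what the received frames actually are
    is_emulator: bool = False             # set by a separate device-integrity check


def injection_flags(s: DeviceSession) -> List[str]:
    """Return the session-level signals that suggest an injected video stream."""
    flags = []
    label = s.camera_label.lower()
    if any(hint in label for hint in VIRTUAL_CAMERA_HINTS):
        flags.append("virtual_camera_label")
    # Metadata that disagrees with the frames hints at a substituted feed.
    if s.reported_resolution != s.frame_resolution:
        flags.append("resolution_mismatch")
    if s.is_emulator:
        flags.append("emulated_device")
    return flags


if __name__ == "__main__":
    suspicious = DeviceSession("OBS Virtual Camera", (1280, 720), (1920, 1080))
    print(injection_flags(suspicious))
```

None of these checks looks at a single pixel of the face, which is exactly why they sit in a separate layer from liveness.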

Putting Together a Defence That Actually Holds

No single layer makes a verification stack deepfake-proof. These things only work in combination:

- ISO/IEC 30107-3 Level 2 certified liveness, independently tested against presentation attacks, not just self-assessed.

- Injection attack detection at the device and session level, separate from video content analysis.

- Document-to-face matching that runs in real time against the live capture, not just against a stored selfie.

- Continuously updated detection models: if the AI is not being retrained on new attack methods regularly, it is falling behind.

- Cross-session fraud signals: repeat attempts from the same device, unusual metadata patterns, and behavioural signals that suggest someone is probing the system rather than trying to open an account.
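The last item on that list is the easiest to sketch in code. The Python example below counts failed verifications per device inside a sliding time window and flags a device once it crosses a limit. The window, the limit, the `ProbeTracker` class, and the idea of a stable `device_id` are all assumptions made for illustration; a production system would weigh many more signals than a failure count.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 3600  # hypothetical look-back window
MAX_FAILURES = 3       # hypothetical number of failures before a device is flagged


class ProbeTracker:
    """Tracks failed verification attempts per device across sessions."""

    def __init__(self) -> None:
        self._failures = defaultdict(deque)

    def record_failure(self, device_id: str, timestamp: float) -> bool:
        """Record a failure; return True if the device now looks like it is probing."""
        attempts = self._failures[device_id]
        attempts.append(timestamp)
        # Drop attempts that have fallen out of the look-back window.
        while attempts and timestamp - attempts[0] > WINDOW_SECONDS:
            attempts.popleft()
        return len(attempts) >= MAX_FAILURES


if __name__ == "__main__":
    tracker = ProbeTracker()
    for t in (0, 10, 20):
        print(tracker.record_failure("device-a", t))
```

A genuine customer who fumbles a selfie once stays under the limit, while a device that keeps trying variations in quick succession does not.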

Regulators are starting to make some of this mandatory rather than optional. FATF’s digital identity guidance calls out presentation attack detection specifically. eIDAS 2.0 sets explicit requirements for liveness and injection resistance at high assurance levels. The direction of travel is clear, and firms still running verification infrastructure that was designed for a pre-deepfake threat environment are going to find the next round of audits uncomfortable.

Conclusion

Fraud losses matter, obviously. But deepfake fraud has a second-order consequence that is harder to put a number on. Every financial institution’s relationship with its customers rests on the assumption that verification works: that the person on the other side of the account is who they say they are. Every deepfake that gets through chips away at that assumption.

Liveness detection, done well, is the most direct technical response available right now. It will not solve the problem permanently; the attack methods will keep evolving, and the defenders will have to keep pace. But it is the checkpoint that everything else in a remote verification flow depends on. If it fails, the rest of the stack does not matter much.