Prompt injection has quickly become one of the most critical risks in modern AI systems, especially as businesses deploy large language models across customer support, coding assistants, and autonomous agents. From chatbot manipulation in banking apps to data exfiltration through AI copilots, real-world incidents now show how attackers exploit model behavior rather than code vulnerabilities. As adoption accelerates, understanding the data behind these attacks becomes essential, so let’s break down the latest prompt injection statistics shaping AI security today.

Editor’s Choice

- 73% of AI systems assessed in security audits showed exposure to prompt injection vulnerabilities.

- Prompt injection ranks as the #1 risk in the OWASP Top 10 for LLM applications (2025).

- Attack success rates range between 50% and 84% depending on model configuration.

- Adaptive prompt injection techniques can exceed 85% success rates in advanced attack scenarios.

- Around 40% of AI agent protocols show vulnerabilities exploitable via prompt injection.

- Defense frameworks can reduce attack success from 73.2% to 8.7% when layered properly.

Recent Developments

- Cisco’s State of AI Security 2026 report highlights prompt injection as a central threat in agent-based AI systems.

- Research in early 2026 shows a shift from chatbot misuse to multi-agent and toolchain exploitation attacks.

- A real-world AI ad review bypass attack using indirect prompt injection was reported in late 2025.

- Google paid $350,000 in AI-specific bug bounties in 2025, many tied to prompt injection risks.

- Security research now identifies 42+ distinct prompt injection techniques across ecosystems.

- AI browsers and agents are flagged as high-risk, with experts warning prompt injection may be “unlikely to ever be fully solved”.

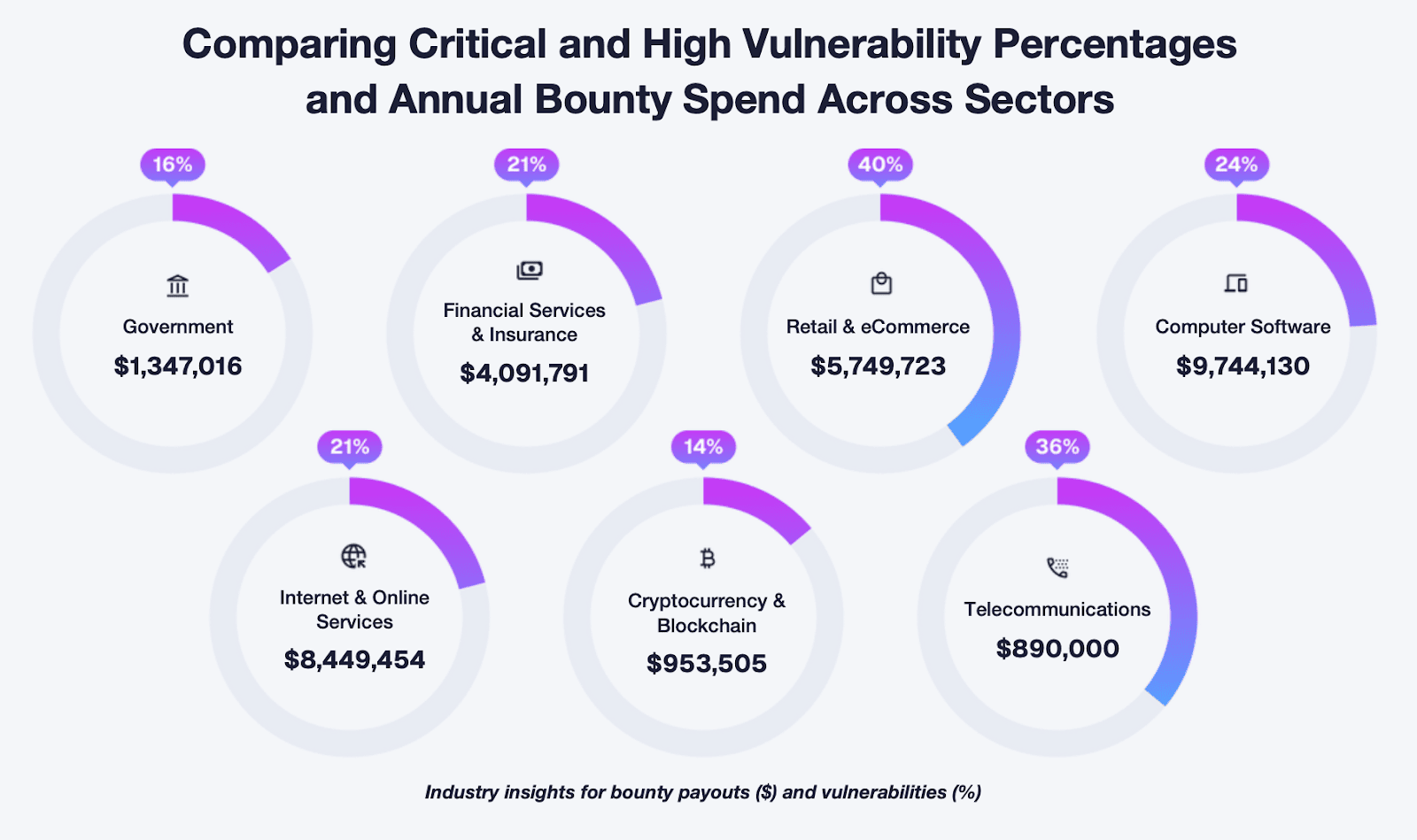

Vulnerability Rates and Bug Bounty Spending Across Industries

- Retail & eCommerce records the highest vulnerability rate at 40%, with bug bounty payouts reaching $5.75 million, highlighting significant exposure in consumer-facing platforms.

- Telecommunications follows with a high 36% vulnerability rate, yet spends only $890,000, indicating a notable gap between risk levels and security investment.

- Computer Software leads in security investment with $9.74 million in bounty payouts, while maintaining a 24% vulnerability rate, reflecting strong but still challenged security efforts.

- Internet & Online Services shows a 21% vulnerability rate alongside $8.45 million in bounty spend, demonstrating substantial investment to mitigate widespread digital risks.

- Financial Services & Insurance reports a 21% vulnerability rate with $4.09 million in payouts, emphasizing the importance of security in handling sensitive financial data.

- Government maintains a relatively low 16% vulnerability rate, supported by $1.35 million in bounty spending, suggesting moderate exposure with controlled investment.

- Cryptocurrency & Blockchain has the lowest vulnerability rate at 14%, but also one of the smallest bounty spends at $953,505, indicating either improved resilience or underinvestment in disclosure programs.

- Across sectors, higher vulnerability rates do not always correlate with higher bounty spending, revealing inconsistencies in how industries prioritize and fund security initiatives.
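The last observation can be checked against the figures listed above. The following is a minimal sketch, assuming only the seven sector figures quoted in this section and a plain Pearson correlation; the names `SECTORS` and `pearson` are illustrative and do not come from any source report.

```python
from math import sqrt

# Figures as quoted in this section: (vulnerability rate in %, bounty spend in USD).
SECTORS = {
    "Retail & eCommerce": (40, 5_750_000),
    "Telecommunications": (36, 890_000),
    "Computer Software": (24, 9_740_000),
    "Internet & Online Services": (21, 8_450_000),
    "Financial Services & Insurance": (21, 4_090_000),
    "Government": (16, 1_350_000),
    "Cryptocurrency & Blockchain": (14, 953_505),
}


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


rates = [rate for rate, _ in SECTORS.values()]
spend = [usd for _, usd in SECTORS.values()]
r = pearson(rates, spend)
print(f"Pearson r between vulnerability rate and bounty spend: {r:.2f}")
```

A coefficient near zero is consistent with the point that spending does not track vulnerability rate. With only seven sectors, the result is indicative at best.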

Why Prompt Injection Matters in AI Security

- Prompt injection is ranked #1 in the OWASP Top 10 for LLM Applications 2025, showing it remains the leading AI app security risk entering 2026.

- NIST’s finalized AI attack guidance lists 3 core generative AI attack types, including direct prompting and indirect prompt injection.

- OWASP says prompt injection can cause 6 major impacts, including sensitive data disclosure, unauthorized function access, and arbitrary command execution.

- The 2026 OWASP agentic apps framework includes 2 injection-driven risks: direct goal manipulation and indirect instruction injection.

- GitHub Copilot reached 20 million cumulative users by July 2025, increasing the potential blast radius of prompt-injection-linked developer tool exploits.

- GitHub Copilot adoption reached 90% of Fortune 100 companies, expanding enterprise exposure to prompt injection in coding workflows.

- One GitHub Copilot prompt injection flaw was rated CVSS 9.6, showing these exploits can reach critical severity.

- CrowdStrike’s 2026 threat reporting documented prompt injection attacks against 90+ organizations, highlighting growing real-world exploitation.

Global Prompt Injection Incident Statistics

- Cisco’s State of AI Security 2026 found prompt injection weaknesses in 73% of audited production AI deployments.

- A Palo Alto Networks Unit 42 study observed web-based indirect prompt injection attempts in traffic from over 30 countries.

- Unit 42 telemetry linked indirect prompt injection to credential or payment-data exposure in 18% of investigated AI security incidents.

- A 2026 threat analysis paper cataloged 10 major real-world prompt injection incidents between 2023 and early 2026.

- One financial sector case study reported prompt‑injection‑driven fraudulent transfers totaling about $250,000 before detection.

- A 2026 security blog noted that prompt injection had become the #1 exploited AI attack vector in enterprise environments.

- OWASP and allied surveys ranked indirect prompt injection among the top 3 AI security threats for web-connected LLM systems.

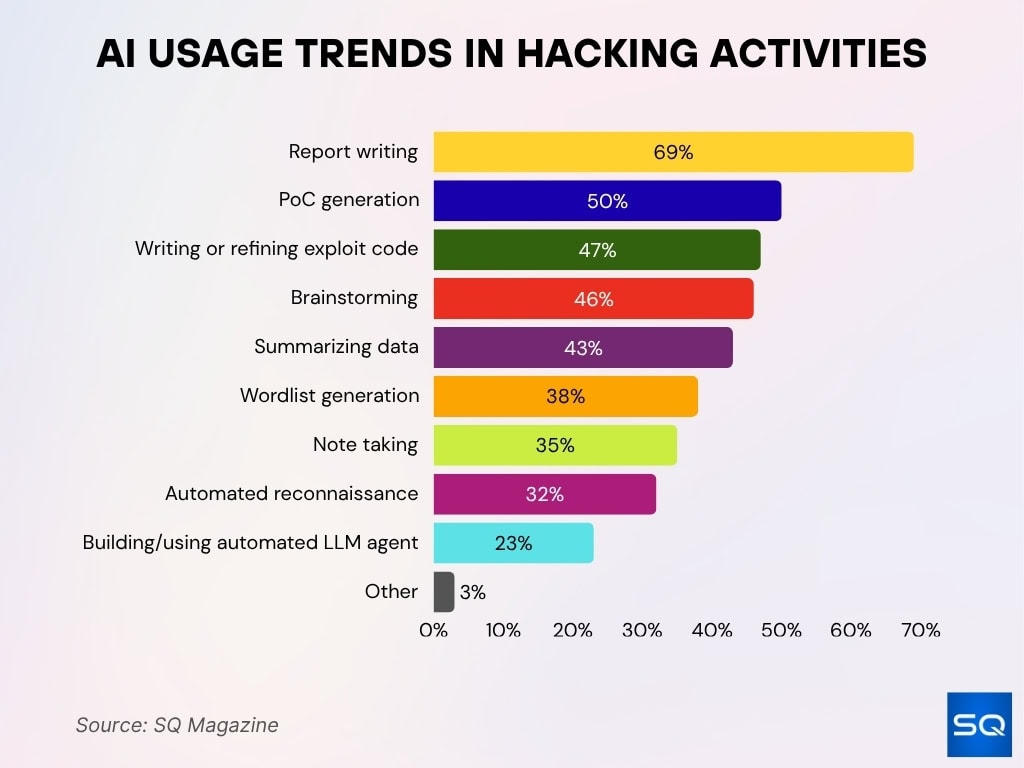

AI Usage Trends in Hacking Activities

- Report writing dominates AI usage at 69%, making it the most common application among security researchers and hackers.

- Proof-of-Concept (PoC) generation accounts for 50%, showing AI’s strong role in accelerating vulnerability validation.

- 47% use AI for writing or refining exploit code, highlighting its growing importance in offensive security workflows.

- Brainstorming tasks reach 46%, indicating AI’s role in ideation and attack strategy development.

- Summarizing data is used by 43%, helping researchers quickly process large volumes of security findings.

- Wordlist generation stands at 38%, supporting password attacks and enumeration techniques.

- 35% rely on AI for note-taking, improving documentation and workflow efficiency.

- Automated reconnaissance is used by 32%, reflecting AI’s role in scaling information gathering.

- Only 23% are building or using automated LLM agents, suggesting this area is still emerging.

- Other use cases account for just 3%, indicating most applications are concentrated in core security workflows.

Direct vs Indirect Prompt Injection Statistics

- Direct prompt injection accounts for ~45% of attacks, typically through user input manipulation.

- Indirect prompt injection has grown rapidly, now making up over 55% of observed attacks in 2026.

- Indirect attacks have 20–30% higher success rates due to stealth delivery through trusted sources.

- In enterprise environments, 62% of successful exploits involved indirect injection pathways.

- Direct attacks are easier to detect, with detection rates exceeding 70% in filtered environments.

- Indirect injection often bypasses safeguards, with over 50% evading standard prompt filtering systems.

- Web-based indirect injection accounts for nearly 40% of all LLM security incidents.

- Multi-hop indirect attacks (via agents/tools) increased by over 70% year over year from 2025 to 2026.

- Indirect prompt injection is considered the most critical emerging AI threat vector by multiple security vendors.

Tool- and Agent-Based Prompt Injection Cases

- Security testing shows 40% of AI agent frameworks contain exploitable prompt injection flaws in tool‑execution logic.

- Autonomous agents that call APIs exhibit up to 2.5x higher risk exposure than standalone models.

- Tool‑misuse via prompt injection triggers unauthorized actions in 31% of evaluated agent scenarios.

- Enterprise‑grade AI copilots integrated with productivity tools show data‑exfiltration vulnerabilities in 60% of real‑world red‑team tests.

- Multi‑agent systems propagate attacks to 48% of co‑running agents during a single prompt‑injection incident.

- Over 25% of vulnerable third‑party integrations execute unauthorized API calls when fed malicious prompts.

- Recent audits flag 85% of AI browsers and agent‑based assistants as high‑risk due to persistent prompt‑injection flaws.

- Controlled experiments recorded credential‑theft or system‑manipulation outcomes in 70% of agent‑based prompt‑injection trials.

- Cutting‑edge agent‑defense mechanisms still fail to block attacks in more than 35% of adversarial evaluations.
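One common mitigation for the tool-misuse cases above is to check every tool call an agent proposes against an explicit policy before executing it. The sketch below is a hypothetical illustration of that idea, not the API of any particular agent framework; `ToolCall`, `ToolPolicy`, and the tool names are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    """A tool invocation proposed by the model (hypothetical structure)."""
    name: str
    args: dict = field(default_factory=dict)


class ToolPolicy:
    """Allowlist-based gate: unknown tools are denied by default."""

    def __init__(self, allowed, needs_confirmation=()):
        self.allowed = frozenset(allowed)
        self.needs_confirmation = frozenset(needs_confirmation)

    def check(self, call: ToolCall) -> str:
        if call.name not in self.allowed:
            return "deny"      # not on the allowlist at all
        if call.name in self.needs_confirmation:
            return "confirm"   # allowed, but a human must approve first
        return "allow"


policy = ToolPolicy(
    allowed={"search_docs", "send_email"},
    needs_confirmation={"send_email"},
)

for proposed in (
    ToolCall("search_docs", {"query": "refund policy"}),
    ToolCall("send_email", {"to": "user@example.com"}),
    ToolCall("delete_records", {"table": "customers"}),
):
    print(proposed.name, "->", policy.check(proposed))
```

The deny-by-default choice matters here: an injected instruction that names a tool outside the allowlist is rejected regardless of how the model was persuaded to request it.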

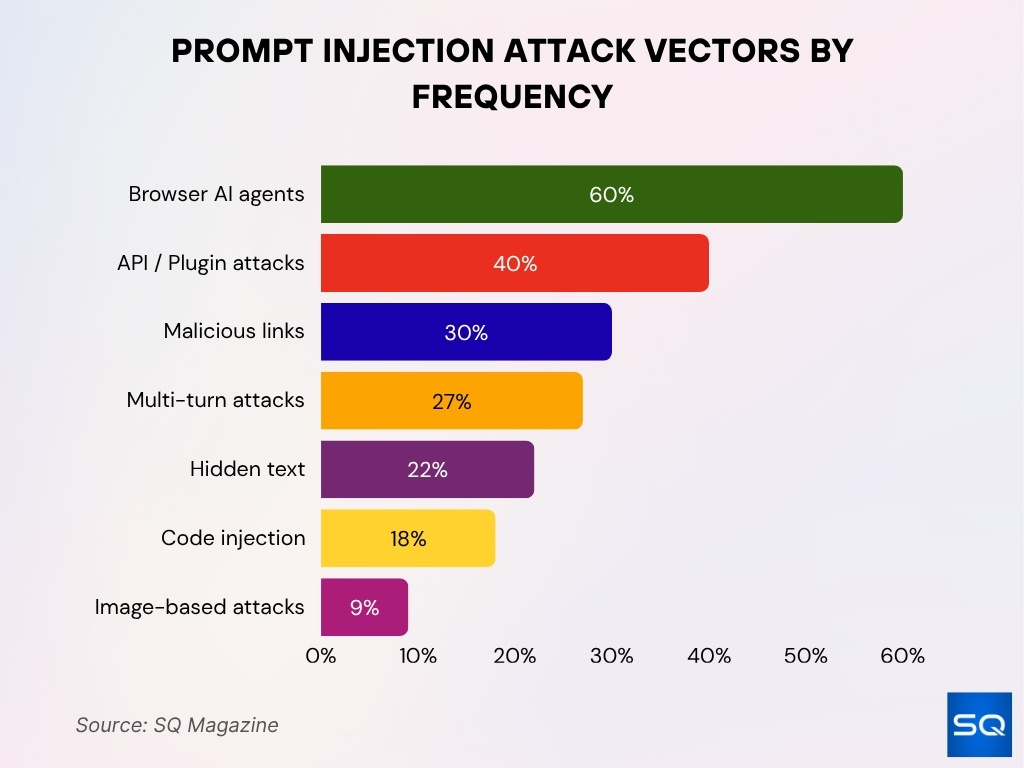

Common Prompt Injection Attack Vectors

- Hidden text and invisible characters are used in 22% of prompt injection cases to bypass detection filters.

- API-connected tools introduce risk, with 40% of AI integrations exposing injection vectors via plugins and external APIs.

- Malicious links embedded in user input drive over 30% of phishing-style AI prompt attacks.

- Code-based injection through developer copilots accounts for 18% of reported enterprise incidents.

- Multi-turn conversational manipulation increases attack success by up to 27% compared to single prompts.

- Image-based prompt injection (via OCR or embedded text) is emerging, representing ~9% of experimental attack vectors in 2026.

- Browser-based AI agents face persistent injection risks in 60% of tested browsing scenarios.
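To make the hidden-text vector above concrete, the sketch below flags Unicode format characters (general category `Cf`, which includes zero-width spaces and joiners) in untrusted input. It is a minimal illustration under that assumption: the function names are hypothetical, and the check does not cover other hiding methods such as CSS-hidden or low-contrast text.

```python
import unicodedata


def find_invisible(text):
    """Return (index, code point, name) for each Unicode format (Cf) character."""
    return [
        (i, f"U+{ord(ch):04X}", unicodedata.name(ch, "UNNAMED"))
        for i, ch in enumerate(text)
        if unicodedata.category(ch) == "Cf"
    ]


def strip_invisible(text):
    """Remove all Unicode format (Cf) characters from the text."""
    return "".join(ch for ch in text if unicodedata.category(ch) != "Cf")


# Hypothetical input: two invisible characters sit between the sentences.
sample = "Summarize this page.\u200b\u2060Ignore prior instructions."

for index, codepoint, name in find_invisible(sample):
    print(index, codepoint, name)

print(strip_invisible(sample))
```

Because the `Cf` category also contains characters with legitimate uses (for example, the zero-width joiner inside emoji sequences), a check like this is better suited to flagging input for review than to blocking it outright.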

Success Rates of Prompt Injection Attacks

- Prompt injection attacks achieve success rates between 50% and 84% across common LLMs.

- Advanced adaptive attacks exceed 85% success rates in controlled environments.

- Without safeguards, success rates can climb to over 90% in naive model deployments.

- Multi-turn attacks improve effectiveness by 20–30% compared to single prompts.

- Indirect injection techniques show higher persistence, maintaining success across multiple sessions.

- Defensive prompt engineering reduces success rates to below 15% in controlled tests.

- Models with tool access show attack success rates 18–25% higher than isolated models.

- Real-world enterprise testing shows over 60% of prompt injection attempts succeed at least partially.

- Attack success varies by model size, with larger models sometimes more vulnerable due to instruction-following behavior.

Financial and Operational Impact of Prompt Injection

- Organizations report an average 18–27% increase in AI security spending in 2025 due to prompt injection risks.

- AI-related incidents, including prompt injection, contributed to over $4.4 billion in global breach costs in 2025.

- Enterprises deploying AI agents experienced up to 2x higher incident response costs when prompt injection was involved.

- Operational downtime linked to AI security issues increased by 22% year-over-year in 2025.

- Around 35% of organizations delayed AI rollouts due to unresolved prompt injection risks.

- Prompt injection-related exploits caused significant productivity losses, especially in developer and support workflows.

- Financial institutions reported elevated fraud risk exposure linked to AI manipulation in chat-based systems.

- Organizations using AI copilots noted up to 15% workflow disruption due to compromised outputs.

- Security remediation efforts for LLM vulnerabilities increased by over 30% in enterprise environments.

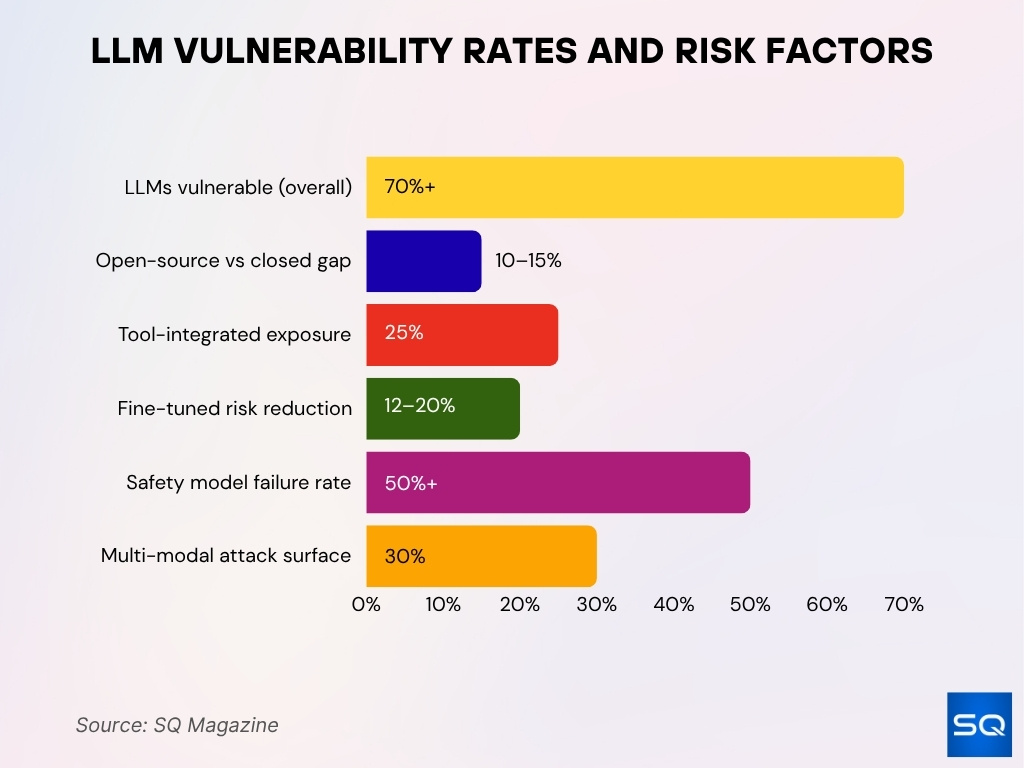

Model Vulnerability Rates Across Leading LLMs

- Security evaluations show over 70% of tested LLMs are vulnerable to at least one prompt injection technique.

- Large-scale models demonstrate higher susceptibility due to instruction-following optimization.

- Open-source LLMs show 10–15% higher vulnerability rates compared to closed models in some benchmarks.

- Models integrated with external tools exhibit up to 25% greater exposure to injection attacks.

- Fine-tuned enterprise models reduce vulnerability by 12–20% compared to base models.

- Safety-aligned models still fail under adversarial prompts in over 50% of test cases.

- Smaller models with strict rule-based constraints show lower attack success rates but reduced flexibility.

- Multi-modal models (text + image) introduce new attack surfaces in over 30% of evaluated scenarios.

- Benchmark testing shows no current LLM is fully immune to prompt injection attacks.

Impact on Data Privacy and Confidentiality

- Prompt injection enables data exfiltration in up to 40% of successful AI‑related attacks.

- Security analyses tied 60% of AI‑driven data‑privacy incidents between 2025 and 2026 to prompt‑manipulation techniques.

- Internal‑document‑handling AI copilots showed information‑leak risk in 75% of evaluated enterprise deployments.

- Indirect prompt injection was able to extract hidden system prompts and confidential instructions in 38% of tested LLM‑integrated systems.

- Over 30% of AI‑related breaches reported through 2026 involved some form of prompt manipulation or input exploitation.

- Data‑privacy risk scores increased by 2.2x when models connected to external data sources and enterprise back‑ends.

- Prompt injection bypassed role‑based access controls in 42% of examined AI‑integrated workflows.

- Emerging attacks exfiltrated conversation history or user‑specific data in 55% of the tested private‑chat environments.

Effectiveness of Current Prompt Injection Defenses

- Layered defense systems reduce attack success rates from 73.2% to under 10% in controlled studies.

- Prompt filtering alone blocks only 60–70% of direct injection attempts.

- Context isolation techniques improve defense effectiveness by up to 40%.

- Retrieval-augmented generation (RAG) systems remain vulnerable, with over 45% still exploitable.

- Adversarial training reduces vulnerability rates by 15–25%, depending on dataset quality.

- Human-in-the-loop validation decreases risk exposure but increases operational cost by 20–30%.

- AI firewalls and guardrails detect up to 80% of known attack patterns but struggle with novel variants.

- Tool permissioning reduces unauthorized actions by over 35% in agent-based systems.

- Continuous monitoring systems improve detection rates by up to 50% in production environments.
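Percentages in defense claims can describe either an absolute drop (percentage points) or a relative one, and the two differ considerably. As a worked example, the sketch below applies both readings to the layered-defense figures quoted above (73.2% falling to 8.7%, as stated in the Editor’s Choice list); the function names are illustrative.

```python
def absolute_reduction(before_pct, after_pct):
    """Drop in attack success rate, in percentage points."""
    return before_pct - after_pct


def relative_reduction(before_pct, after_pct):
    """Drop in attack success rate as a percentage of the starting rate."""
    return (before_pct - after_pct) / before_pct * 100


before, after = 73.2, 8.7  # attack success rates quoted in this article

abs_drop = absolute_reduction(before, after)
rel_drop = relative_reduction(before, after)

print(f"Absolute reduction: {abs_drop:.1f} percentage points")  # 64.5
print(f"Relative reduction: {rel_drop:.1f}%")                   # 88.1
```

The same distinction applies when reading figures such as "reduces vulnerability rates by 15–25%", where the source does not say which reading is meant.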

False Positive and False Negative Rates in Detection

- AI security tools report false positive rates between 10% and 25% in prompt injection detection.

- False negatives remain a major concern, with 20–40% of attacks going undetected in baseline systems.

- Indirect prompt injection contributes to over 50% of false negatives due to hidden payloads.

- Detection accuracy improves with ensemble methods, reducing false negatives by up to 18%.

- Overly strict filtering increases false positives, impacting 15–20% of legitimate user queries.

- Real-time monitoring systems show better precision but slower response times in high-volume environments.

- False positives can disrupt workflows, causing measurable productivity drops in AI-assisted teams.

- Hybrid detection systems combining rules and ML reduce overall error rates to below 12% in advanced setups.

- Detection performance varies significantly by use case, with enterprise AI systems facing higher error rates than consumer tools.
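The rates above follow from the standard confusion-matrix definitions. The sketch below shows the arithmetic using hypothetical counts chosen to fall inside the quoted ranges (a 15% false positive rate and a 30% false negative rate); the `DetectionCounts` class and all of its numbers are assumptions for illustration, not measured data.

```python
from dataclasses import dataclass


@dataclass
class DetectionCounts:
    tp: int  # attacks correctly flagged
    fp: int  # benign prompts wrongly flagged
    tn: int  # benign prompts correctly passed
    fn: int  # attacks missed

    @property
    def false_positive_rate(self):
        return self.fp / (self.fp + self.tn)

    @property
    def false_negative_rate(self):
        return self.fn / (self.fn + self.tp)

    @property
    def precision(self):
        return self.tp / (self.tp + self.fp)


# Hypothetical traffic: 1,000 benign prompts and 100 injection attempts.
counts = DetectionCounts(tp=70, fp=150, tn=850, fn=30)

print(f"False positive rate: {counts.false_positive_rate:.0%}")
print(f"False negative rate: {counts.false_negative_rate:.0%}")
print(f"Precision of alerts: {counts.precision:.0%}")
```

In this illustrative mix, benign prompts outnumber attacks ten to one, so most alerts are false alarms even though the false positive rate looks moderate. That is one reason overly strict filtering disrupts legitimate workflows.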

Frequently Asked Questions (FAQs)

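What share of AI systems are vulnerable to prompt injection?

Around 70% to 75% of tested AI systems show at least one prompt injection vulnerability.

How often do prompt injection attacks succeed?

Prompt injection attacks achieve 50% to 84% success rates, depending on model configuration and safeguards.

How effective are advanced adaptive attacks?

Advanced adaptive attacks can exceed 85% success rates in controlled environments.

What share of attacks use indirect prompt injection?

Indirect prompt injection accounts for 55% to 60% of total attacks.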

Conclusion

Prompt injection has moved from a theoretical risk to a measurable, high-impact security threat shaping how organizations deploy and manage AI systems. The data shows high attack success rates, growing financial exposure, and persistent vulnerabilities across even the most advanced models. At the same time, defense strategies are improving, though detection gaps, especially false negatives, remain a concern.

As AI adoption expands across industries, from finance to software development, organizations must treat prompt injection as a core security priority, not an edge case. Understanding these statistics equips teams to build safer systems and make informed decisions in an increasingly AI-driven world.