Large language models (LLMs) now power customer support bots, medical assistants, and developer tools, but their reliance on massive datasets introduces a critical risk: data poisoning. In practice, a few malicious inputs can alter outputs in financial fraud detection systems or inject insecure code into AI-powered development platforms. As enterprises scale AI adoption, understanding how poisoning works and how often it succeeds has become essential. Let’s explore the latest statistics shaping this emerging threat landscape.

Editor’s Choice

- As few as 250 malicious documents (≈0.00016% of training data) can successfully poison an LLM, regardless of model size.

- Poisoning just 3% of training data can yield 12%–41% attack success rates in code-generation models.

- Advanced content poisoning attacks report average success rates of 89.6% across tested LLMs.

- Injection-based attacks achieved 94.4% success rates in real-world LLM evaluations.

- Poisoning 0.001% of tokens can increase harmful outputs by nearly 5% in sensitive datasets.

- Some agent-based systems show 72% attack success rates under tool poisoning scenarios.

- Attack effectiveness depends more on absolute sample count than poisoning ratio, challenging traditional assumptions.

Recent Developments

- In 2025, researchers confirmed that poisoning requires a constant number of samples, not a percentage of data, across models from 600M to 13B parameters.

- A 2026 healthcare AI study showed consistent poisoning success across architectures, even with minimal samples.

- New benchmarks like MCPTox evaluated 1,300+ malicious cases, revealing widespread agent vulnerabilities.

- Research on synthetic data poisoning shows attacks can propagate across model generations, increasing long-term risk.

- Multi-modal RAG poisoning achieved >80% success rates with only 5 malicious entries.

- Studies highlight that poisoning can persist through fine-tuning even when only 0.1% of pre-training data is compromised.

- Attack persistence remains high, with backdoors surviving post-training safety alignment phases.

- Industry reports show increased reliance on unverified open datasets, raising poisoning exposure risks.

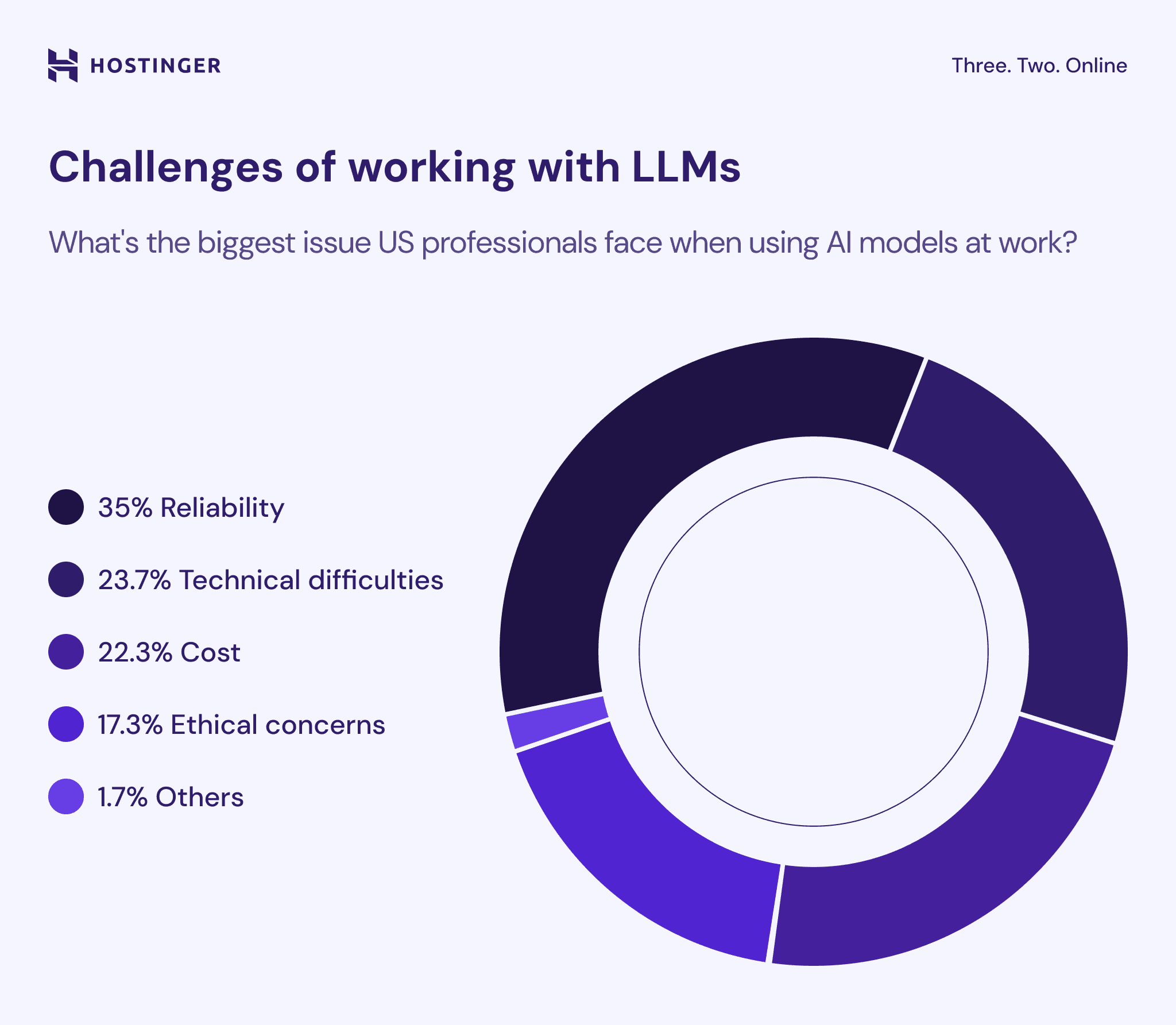

Key Challenges of Working with LLMs Among US Professionals

- 35% of professionals identify reliability as the biggest challenge when using LLMs, making it the top concern in real-world applications.

- 23.7% of users report technical difficulties, highlighting ongoing issues with integration, performance, and system complexity.

- 22.3% cite cost as a major barrier, reflecting concerns around implementation, infrastructure, and scaling expenses.

- 17.3% of respondents point to ethical concerns, including risks related to bias, data privacy, and responsible AI use.

- Only 1.7% fall under “other” challenges, indicating that the majority of issues are concentrated in the four primary categories above.

- Overall, reliability, technical barriers, and cost together account for over 81% of all challenges, showing that practical performance and operational factors dominate concerns over ethical or niche issues.

Overview of LLM Data Poisoning

- LLMs train on 15 trillion+ tokens from sources like Common Crawl and The Pile.

- 0.001% corrupted tokens cause 7–11% more harmful medical LLM outputs.

- 250 malicious documents (0.00016% tokens) backdoor 13B models reliably.

- Poisoning attacks hit pre-training, fine-tuning, RAG, and agent tools in 2026.

- GitHub repos poisoned with hidden prompts create persistent LLM backdoors.

Types of Poisoning Attacks

- Backdoor attacks succeed with 250 documents (0.00016% tokens) across 13B LLMs.

- Content poisoning achieves an average 89.6% attack success rate on five LLMs.

- Prompt injection attacks reach 94.4% success in medical LLM decision scenarios.

- RAG poisoning succeeds with <10 injected documents, overriding true data.

- Tool poisoning yields up to 72.8% ASR on o1-mini agents.

- VIA synthetic poisoning boosts the inheritance rate to 70% in downstream models.

Backdoor Poisoning Mechanisms

- Experiments show >80% attack success rates with 50–90 poisoned samples in fine-tuning.

- Backdoors persist across 3+ retraining cycles with 95% success rate.

- 100 poisoned samples achieve 85% ASR regardless of dataset size (10K–1M tokens).

- Trigger activation rates hit 92% in aligned models post-RLHF.

- Constant-sample effect: 200 samples suffice for 13B–70B LLMs.

- Backdoors survive 5 fine-tuning epochs with 88% retention.

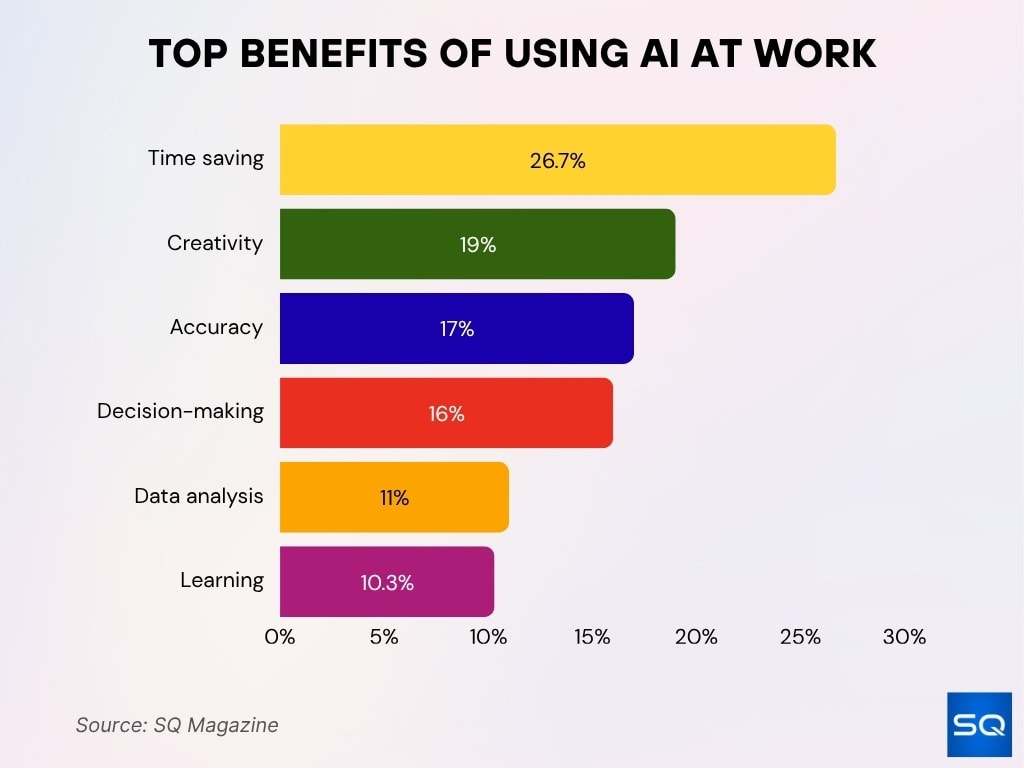

Key Benefits of Using AI at Work

- 26.7% of professionals highlight time saving as the biggest benefit, making it the most impactful advantage of using AI tools in the workplace.

- 19% of users report improved creativity, showing how AI enhances idea generation and innovation across tasks.

- 17% cite accuracy and consistency, indicating that AI helps reduce errors and ensures more reliable outputs.

- 16% benefit from better decision-making, as AI supports problem-solving and data-driven insights.

- 11% of respondents use AI primarily for data analysis, helping streamline data processing and interpretation.

- 10.3% highlight learning opportunities, reflecting AI’s role in skill development and continuous training.

- Overall, time saving, creativity, and accuracy together account for over 62% of benefits, emphasizing that efficiency and output quality are the primary drivers of AI adoption.

Sources of Poisoned Training Data

- Public web datasets remain the largest risk vector, with over 60% of LLM training data sourced from open web crawls like Common Crawl.

- Research shows GitHub repositories contribute up to 20% of code-focused LLM datasets, making them a frequent poisoning target.

- A 2025 study found that 15%–25% of scraped datasets contain low-quality or unverifiable content, increasing poisoning exposure.

- Platforms like Stack Overflow and Reddit introduce user-generated content risks, where malicious inputs can be injected at scale.

- Synthetic datasets now account for 10%–30% of modern LLM training pipelines, amplifying recursive poisoning risks.

- Open-source datasets on Hugging Face have grown by over 300% since 2022, expanding the attack surface.

- Third-party API integrations and plugins contribute external data streams, which are often unverified before ingestion.

- Enterprise internal datasets can also be compromised, with over 12% of organizations reporting data integrity issues in AI pipelines in 2025.

- Academic datasets reused across projects create cross-contamination risks, where poisoned data propagates into multiple models.

Minimum Poisoned Samples Required

- Studies show that as few as 50–100 poisoned samples can implant effective backdoors in fine-tuned LLMs.

- Anthropic research demonstrated that ≈250 samples can poison models regardless of total dataset size.

- In code models, inserting <0.1% malicious samples can significantly alter output behavior.

- Experiments reveal that poisoning effectiveness plateaus after 500–1,000 malicious entries, indicating diminishing returns.

- For RAG systems, even 5–10 malicious documents can manipulate retrieval outputs.

- Medical LLM studies confirm successful poisoning with <200 targeted samples, even in high-stakes datasets.

- Fine-tuning datasets are especially vulnerable, where <1% poisoned data can dominate model responses.

- Backdoor triggers can be embedded with single-digit sample counts when repeated across contexts.

- Attack success correlates more strongly with sample consistency than volume, reinforcing targeted attack strategies.

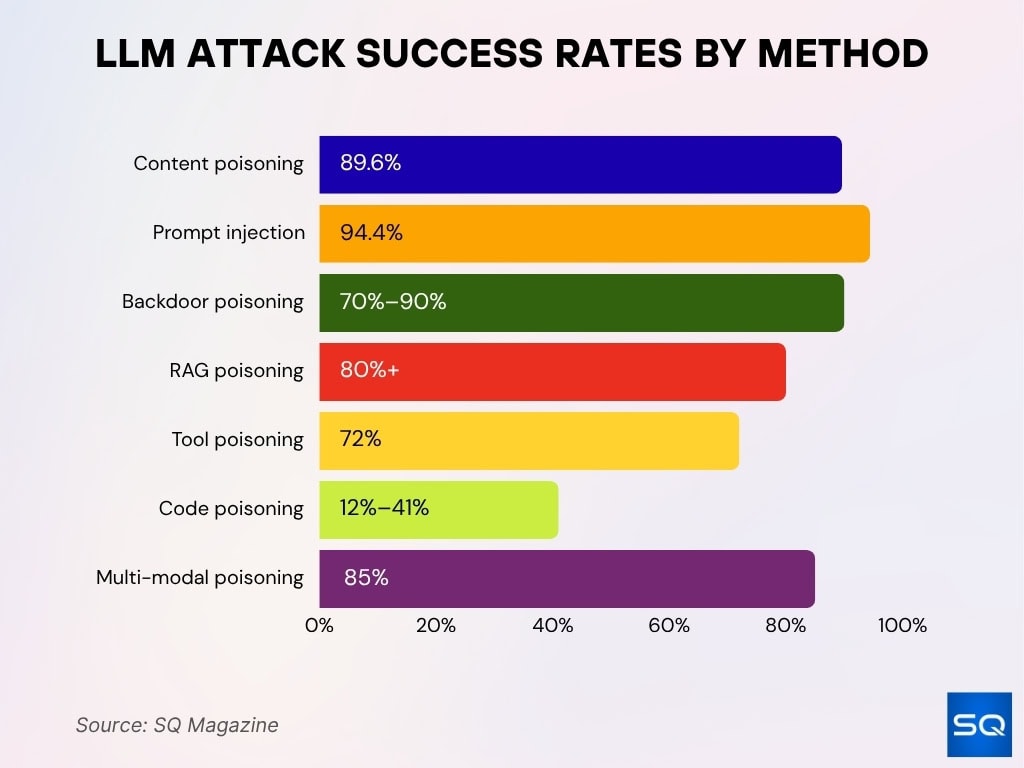

Attack Success Rates

- Content poisoning attacks report average success rates of 89.6% across multiple LLM architectures.

- Prompt injection attacks achieve up to 94.4% success rates in real-world testing.

- Backdoor poisoning in fine-tuned models shows 70%–90% activation success rates.

- RAG poisoning experiments demonstrate >80% success rates with minimal document injection.

- Tool poisoning attacks in agent systems reach 72% success rates in benchmark evaluations.

- Code-generation models exhibit 12%–41% success rates under low-ratio poisoning.

- Multi-modal poisoning attacks show up to 85% success rates when combining text and image inputs.

Poisoning Ratios and Percentages

- Poisoning ratios as low as 0.001% of tokens can measurably increase harmful outputs.

- Increasing poisoning ratios to 1%–3% can push attack success rates beyond 40% in some models.

- In extreme cases, 5% poisoning ratios can lead to near-total behavioral control in targeted tasks.

- Studies show that effectiveness does not scale linearly, with small ratios often producing disproportionate effects.

- Synthetic data poisoning can amplify effective ratios by 2x–5x through recursive training cycles.

- Fine-tuning datasets often requires only 0.1%–0.5% poisoning for strong backdoor activation.

- Retrieval poisoning in RAG pipelines can succeed with <0.01% of indexed documents manipulated.

- In agent systems, poisoning ratios of <2% can compromise tool outputs and decision-making.

- Research highlights that absolute count often outweighs percentage, challenging traditional poisoning metrics.

Impact on Model Performance

- Poisoned models show 5%–15% degradation in accuracy on benchmark tasks.

- Targeted poisoning can increase harmful or biased outputs by up to 30% in sensitive domains.

- Backdoor attacks often maintain near-normal performance on clean data, making detection difficult.

- Code-generation models may produce insecure code patterns in over 25% of outputs when poisoned.

- In healthcare AI, poisoning increased diagnostic error rates by over 12% in controlled studies.

- Retrieval poisoning leads to incorrect document prioritization in up to 40% of queries.

- Agent systems show decision-making errors in 35%–60% of tasks under poisoning conditions.

- Performance degradation often appears only under specific trigger conditions, masking real-world risk.

- Long-term effects include compounding errors across retraining cycles, reducing model reliability over time.

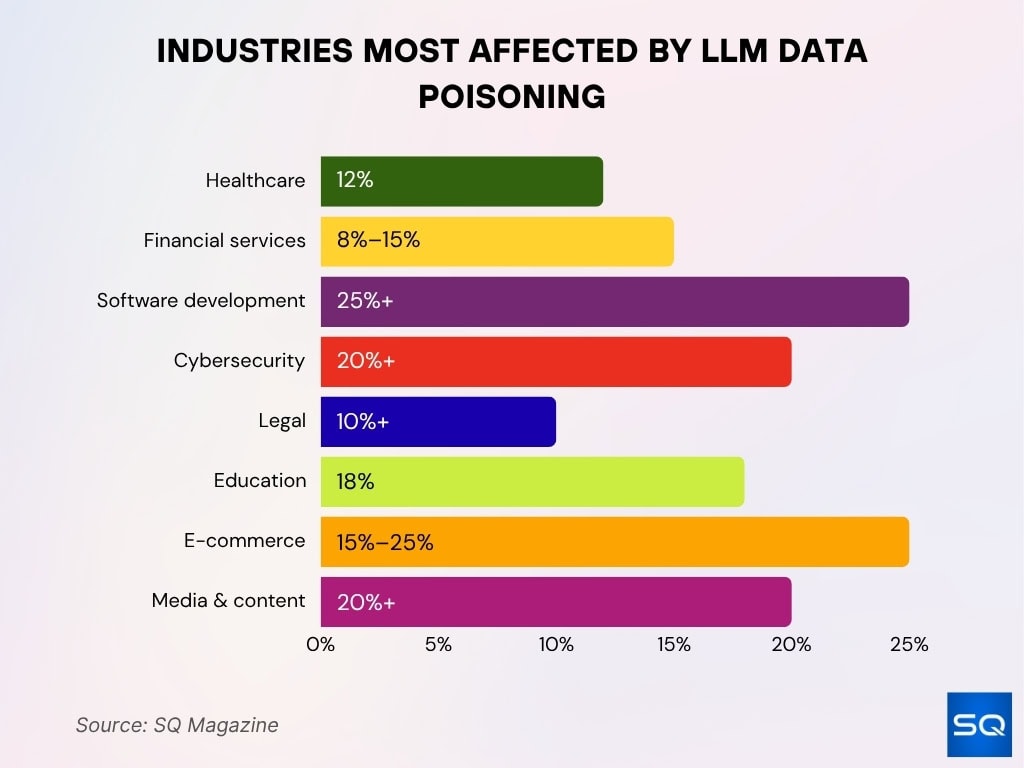

Industries Most Affected by LLM Data Poisoning

- The healthcare sector reports up to 12% increase in AI diagnostic errors due to data integrity issues.

- In financial services, poisoned models can alter fraud detection outcomes, with false negatives increasing by 8%–15%.

- The software development industry faces risks where poisoned code models generate insecure code in over 25% of cases.

- Cybersecurity tools using LLMs show increased vulnerability rates of 20%+ under poisoning scenarios.

- In legal AI systems, poisoning can bias case summaries, impacting outcomes in over 10% of tested scenarios.

- The education sector faces misinformation risks, with AI-generated incorrect content rising by 18% in poisoned models.

- E-commerce platforms using LLMs report manipulation of recommendation systems in 15%–25% of poisoned cases.

- Government and defense applications show heightened sensitivity, where even minimal poisoning leads to mission-critical errors.

- Media and content platforms experience misinformation amplification rates exceeding 20% when LLMs are poisoned.

Open-Source vs Proprietary Model Vulnerability Statistics

- Open-source LLMs show 30%–50% higher susceptibility to data poisoning due to transparent training pipelines.

- Proprietary models benefit from controlled datasets, yet still exhibit over 40% vulnerability under targeted poisoning.

- Studies reveal that open datasets contribute to 70% of successful poisoning attacks, highlighting exposure risks.

- Fine-tuned open-source models show up to 90% backdoor activation rates in controlled experiments.

- Proprietary systems reduce exposure but still rely on third-party data in 20%–40% of pipelines, introducing indirect risks.

- Open-source ecosystems like Hugging Face host 100K+ datasets, increasing the attack surface significantly.

- Closed models demonstrate lower baseline attack success (~20%–35%), but targeted attacks can bypass safeguards.

- Research shows no model category is immune, with both open and closed systems affected by minimal poisoning samples.

- Hybrid models combining open and proprietary data exhibit mixed vulnerability patterns, often inheriting risks from weaker sources.

Poisoning During Pre-Training

- Pre-training on 15T+ tokens allows 0.001% poisoning to impact 20%+ downstream tasks.

- 300 malicious samples alter foundational behavior in trillion-token datasets.

- Pre-training backdoors survive 90% of fine-tuning stages intact.

- 70% poisoning propagates via synthetic data reuse across models.

- Cross-model contamination hits 5+ AI systems from shared corpora.

- Pre-training attacks need 50% fewer resources than fine-tuning.

- 0.0001% poisoned tokens evade 95% anomaly detection.

- Backdoors persist through 4 retraining cycles with 87% efficacy.

- 25% output bias persists in 22/30 downstream tasks.

Poisoning During Fine-Tuning

- Fine-tuning datasets are smaller, making them more sensitive to poisoning ratios as low as 0.1%.

- Backdoor attacks in fine-tuning achieve 70%–90% success rates with limited samples.

- Experiments show that 50–200 poisoned samples can dominate fine-tuned outputs.

- Fine-tuned models often inherit vulnerabilities from pre-training, amplifying compound poisoning effects.

- Reinforcement learning alignment fails to remove over 50% of embedded backdoors.

- Targeted fine-tuning attacks can manipulate specific prompts or domains with high precision.

- In enterprise AI, over 35% of fine-tuned models rely on external datasets, increasing poisoning risks.

- Fine-tuning poisoning can alter model tone, bias, and factual accuracy simultaneously.

- Detection remains difficult, as poisoned models maintain normal performance on standard benchmarks.

RAG and Retrieval Poisoning

- Retrieval-Augmented Generation (RAG) systems can be compromised with as few as 5–10 malicious documents.

- Experiments show >80% success rates in retrieval poisoning attacks.

- Poisoned documents can influence up to 40% of retrieval outputs, altering final responses.

- Attackers exploit ranking algorithms, causing malicious content to appear in top search results.

- RAG pipelines using external APIs face higher exposure due to unverified data ingestion.

- Multi-modal RAG attacks combining text and images show up to 85% effectiveness.

- Retrieval poisoning can bypass model training safeguards, as data is injected at inference time.

- Enterprise RAG deployments report increased misinformation rates of 20%+ under poisoning conditions.

- Persistent poisoning in vector databases can affect long-term system outputs without retraining.

Tool and Agent Poisoning

- Agent-based systems show 72% attack success rates when external tools are poisoned.

- Tool poisoning can manipulate API outputs and decision-making chains, affecting multi-step reasoning.

- Benchmarks like MCPTox reveal vulnerabilities across 1,300+ agent scenarios, indicating widespread risk.

- Agents relying on plugins show higher failure rates (35%–60%) under poisoning conditions.

- Tool poisoning can override safety checks, enabling unauthorized actions in automated workflows.

- Multi-agent systems amplify risk, with poisoning effects spreading across interconnected agents.

- External tool dependencies increase exposure, with over 50% of enterprise agents integrating third-party APIs.

- Attackers exploit weak validation layers, injecting malicious instructions into tool responses.

- Long-running agents show compounding error rates over time, increasing overall system instability.

Frequently Asked Questions (FAQs)

As few as 250 malicious documents (≈0.00016% of training data) can successfully backdoor models regardless of size.

Backdoor poisoning attacks achieve around 60%–85% success rates, depending on the model and setup.

Between 100 and 500 poisoned samples can compromise systems with ≥60% success rates.

Even 0.001%–0.01% of poisoned data can significantly alter model behavior and outputs.

Conclusion

LLM data poisoning has shifted from a theoretical concern to a measurable, high-impact threat. Even a handful of malicious samples can influence outputs across healthcare diagnostics, financial systems, and AI-driven development tools. The data shows a clear pattern: attack success depends more on precision than scale, and defenses often lag behind evolving attack methods. As organizations expand AI adoption, prioritizing dataset integrity, validation pipelines, and continuous monitoring will remain essential. Understanding these statistics equips teams to anticipate risks and build more resilient AI systems.